Note

This page provides a public overview of the architecture of the flow ecosystem. I’ll try to keep it up to date to reflects flow’s current design and evolving vision.

Last updated: September 9, 2025 | flow v1.1.0

Flow is a YAML-driven task runner and workflow automation tool designed around composable executables, built-in secret management, and cross-project automation. This document covers the technical architecture and implementation details.

Design Philosophy

- Developer-Centric: Flow should be designed with developers in mind, providing a powerful yet intuitive interface for managing automation tasks.

- Composable: Flow should allow users to compose complex workflows from simple, reusable executables, enabling cross-project automation and collaboration.

- Discoverable: Flow should make it easy to find and run executables across multiple projects and workspaces, with a focus on discoverability and usability.

- Local-first: Flow should be able to be run entirely on a local machine, with no external dependencies or cloud services required.

- Malleable: Flow should be easily extensible and customizable, allowing users to adapt it to their specific needs and use it in a variety of contexts.

System Overview

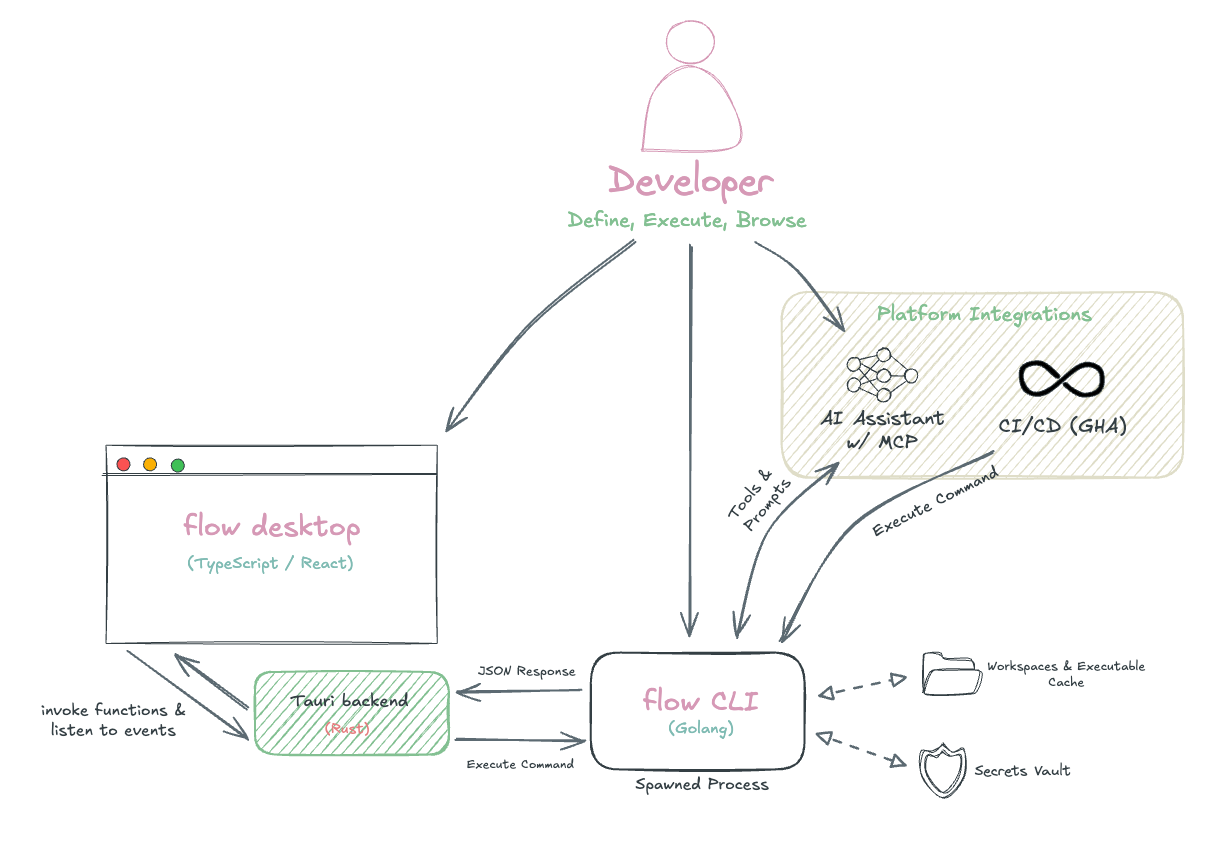

Flow’s architecture centers on a CLI-first design where the Go-based CLI engine handles all business logic, with other interfaces acting as presentation or integration layers.

The desktop app and other interfaces communicate with the CLI through process execution, with each user action spawning a discrete CLI command. Because of this, it’s important that CLI operations are fast and efficient, which is achieved through caching and optimized data integrations.

Technical Stack

Core Languages

- Go - CLI engine, business logic, and execution runtime

- TypeScript/React - Desktop frontend with type-safe CLI communication

- Rust - Desktop backend via Tauri framework

User Interface

- Terminal: Bubble Tea with custom tuikit component library

- Desktop: Tauri + Mantine UI for VSCode-like interface

- CLI: Cobra for command structure and auto-completion

Core Libraries

- YAML Processing: yaml.v3 for YAML parsing and serialization

- Process Management: mvdan/sh for shell execution

- Expression Engine: Go’s

text/template+ Expr for conditional logic and templating - Markdown Rendering: Glamour and react-markdown for auto-generated documentation UI viewers

Development Tools

- Type Generation: go-jsonschema, typify, and json-schema-to-typescript for cross-language code generation

- Go Testing: Ginkgo + Gomega for BDD-style tests and GoMock for interface testing

- Build & Release: Flow Execute GitHub Action and GoReleaser for cross-platform CLI distribution

Organizational Model

Flow’s organizational system creates a hierarchical structure that scales from individual projects to complex multi-project ecosystems. The system balances discoverability with isolation, enabling both focused work within projects and cross-project composition.

Requirements

Hierarchy Structure

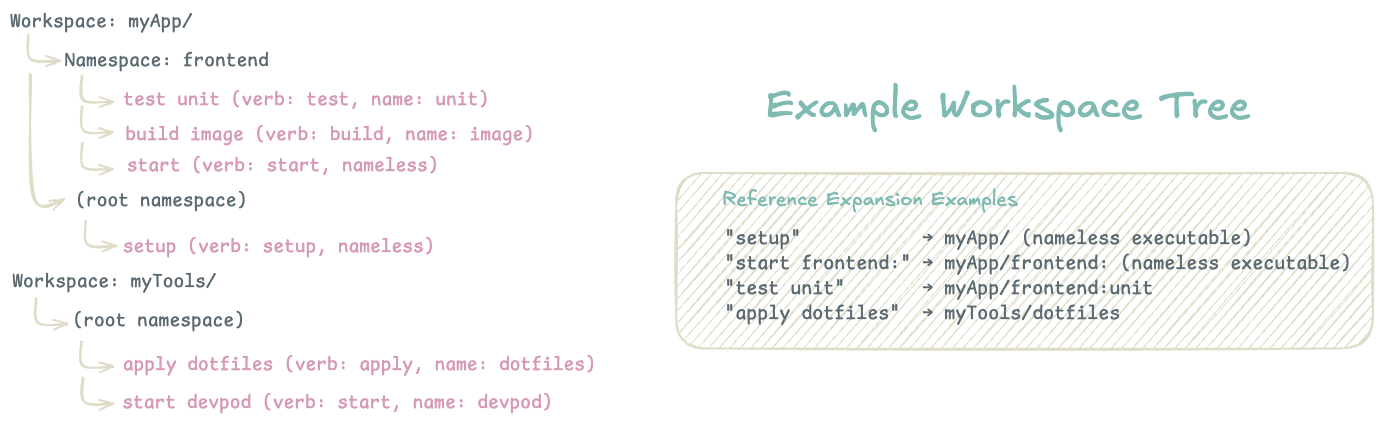

Workspaces serve as the top-level organizational unit, typically mapping to Git repositories or major project boundaries. Each workspace contains its own configuration, executable discovery rules, and isolated namespace hierarchy.

Namespaces provide logical grouping within workspaces, similar to packages in programming languages. They enable organizational flexibility - a single workspace might have namespaces for frontend, backend, deploy, or tools. Namespaces are optional but recommended for workspaces with many executables.

Executables are the atomic units of automation, uniquely identified within their namespace by their name and verb combination. This allows multiple executables with the same name but different purposes (build api vs deploy api).

Reference System

Flow uses a URI-like reference system for executable identification:

workspace/namespace:name

│ │ │

│ │ └─ Executable name (Optional but unique within verb group + namespace)

│ └───────── Optional namespace grouping

└─────────────────── Workspace boundary

Reference Resolution Rules:

my-task→ Current workspace, current namespace, name=“my-task”backend:api→ Current workspace, namespace=“backend”, name=“api”project/deploy:prod→ workspace=“project”, namespace=“deploy”, name=“prod”project/→ workspace=“project”, no namespace, nameless executable

Reference Format Trade-offs:

- Chosen: Slightly more verbose for simple cases

- Avoided: Naming collisions, poor tooling support, brittle file/directory coupling

Verb System

Verbs describe the action an executable performs while enabling natural language interaction. Verbs can be organized into semantic groups with aliases:

# Executable definition

verb: build

verbAliases: [compile, package, bundle]

name: my-app

# With the above, all of these commands are equivalent:

flow build my-app

flow compile my-app

flow package my-app

This system allows developers to use whichever verb feels most natural while maintaining executable uniqueness through the [verb group + name] constraint.

Default Verb Groups:

I’ve decided to significantly reduce the number of default verb groups to focus on the most common actions with the most semantic clarity. See the flow documentation for the latest default list.

Context Awareness

Flow maintains context awareness to reduce typing and improve ergonomics:

Current Workspace Resolution:

- Dynamic Mode: Automatically detects workspace based on current directory

- Fixed Mode: Uses explicitly set workspace regardless of location

Namespace Scoping:

- Commands inherit current namespace setting

- Explicit namespace references override current context

Note to self: Explicit command overrides of workspace / namespace may become an emerging need with the Desktop UI and MCP server usage.

Discovery Rules: Each workspace can configure which directories to include or exclude from executable discovery, enabling fine-grained control over what Flow considers part of the automation ecosystem.

Cross-Project Composition

The reference system enables powerful cross-project workflows:

executables:

- verb: deploy

name: full-stack

serial:

execs:

- ref: "build frontend/" # Different workspace

- ref: "build backend:api" # Different namespace

- ref: "deploy" # Current context

This allows building complex automation that spans multiple repositories while maintaining clear boundaries and dependencies.

Execution Engine

The execution engine is the core of Flow, responsible for running executables defined in YAML files.

Runner Interface

The execution system uses a runner interface pattern where each executable type implements:

type Runner interface {

Name() string

Exec(

ctx context.Context, // execution context

exec *executable.Executable, // executable to run

eng engine.Engine, // optional engine for execution

inputEnv map[string]string, // environment variables for execution

inputArgs []string, // command line arguments

) error

IsCompatible(executable *executable.Executable) bool

}

Current runner implementations include:

- Exec Runner: Shell command execution

- Request Runner: HTTP request handling

- Launch Runner: Application/URI launching

- Render Runner: Markdown rendering

- Serial Runner: Sequential execution of multiple executables

- Parallel Runner: Concurrent execution with resource limits

Exec Type Requirements: Request Type Requirements: Launch Type Requirements: Render Type Requirements: Serial and Parallel Requirements:Executable Runner Requirements

Workflows (Serial and Parallel)

The serial and parallel runners allow for composing complex workflows from simpler executables. You do this

by defining exec steps with a RefConfig:

type SerialRefConfig struct {

Cmd string // The command to execute. One of `cmd` or `ref` must be set.

Ref Ref // A reference to another executable. One of `cmd` or `ref` must be set.

Args []string // Arguments to pass to the executable.

If string // An expression that determines whether the executable should run

Retries int // The number of times to retry the executable if it fails.

ReviewRequired bool // Prompt the user to review the output of the executable before continuing.

}

// Parallel is very similar to Serial

type ParallelRefConfig struct {

Cmd string

Ref Ref

Args []string

If string

Retries int

}

Additionally, limiting the number of concurrent executions in parallel workflows is supported.

Execution and result handling is managed by the internal engine.Engine interface. The current implementation includes retry logic, error handling, and result aggregation.

Execution Environment and State

Environment Inheritance Hierarchy:

Environment variables are provided to the running executable in the following order:

- System environment variables (lowest priority)

- Dotenv files (

.env, workspace-specific) - Flow context variables (

FLOW_WORKSPACE_PATH,FLOW_NAMESPACE, etc.) - Executable

params(secrets, prompts, static values) - Executable

args(command-line arguments) - CLI

--paramoverrides (highest priority)

State Management

Currently there are two ways that state can be managed when composing workflows within a serial or parallel executable:

- Cache Store: Key-value persistence across executions with scoped lifetime—values set outside executables persist globally, while values set within executables are automatically cleaned up on completion. This store used go.etcd.io/bbolt for data storage across processes.

- Temporary Directories: Isolated temporary directories (

f:tmp) provide scratch space for executables with automatic cleanup, while supporting shared access across serial/parallel workflow steps.

This, of course, is in addition to the general usage of shared state from the secrets vault, environment variables, etc.

File System Access

By default, execution’s working directory is determined is the directory containing the flow file that defines the executable.

This can be configured per-executable using special prefixes like // (workspace root), ~/ (user home), f:tmp (temporary).

At this time, there is no automatic sandboxing—executables, they inherit full user permissions. Flow assumes users understand their workflows’ scope and potential for system modification, prioritizing automation flexibility over execution isolation. This limitation could be improved with containerized executions, a feature that hasn’t shipped yet.

See executable guide and state management for usage details.

Performance and Caching

Cache Hierarchy

Flow implements multi-level caching:

- Memory Cache: Recently accessed executables and workspace data

- Disk Cache: Workspace and executable file/directory mapping and template registry

- File System: Source of truth for all configuration

The cache is updated on demand with the flow sync command or --sync global argument.

It should only need to be updated if executable locations or identifiers change, such as when adding new executables or changing workspace directories.

There is some logic to resync if there is a cache miss, but this only happens for the exec commands.

Executable Discovery

Workspace scanning uses filepath.WalkDir with early termination for excluded directories. Exclusions and inclusions are defined in the workspace configuration file (flow.yaml).

The discovery process searches for all YAML files with the .flow, .flow.yaml, or .flow.yml extensions, and

parses them to build the executable registry.

During this process, expanding references for verb and name aliases is handled, allowing for quick lookups by verb and name combination in future operations.

Eager Discovery vs. Lazy Loading:

- Chosen: Eager discovery with caching

- Rationale: Quicker response time is better than lower memory usage

- Cost: Memory usage that scales with # of workspaces and executables Note to self: Some performance testing needed

- Benefit: Instant autocomplete, consistent performance

Imported / Generated Executables

To support the ease of onboarding workflow into the flow ecosystem, executables can be generated from a couple of common developer workflow files. The current implementation includes importing shell scripts, Makefile targets, package.json scripts, and common Docker Compose commands. Imported executables are treated identically to YAML-defined ones in the discovery system, appearing in the browse interface and supporting the same reference patterns.

In the current implementation, the “verb” and “name” of the executable is derived from the context of the imported workflow. For instance, shell script file names become the executable name with the default exec verb, a package.json script with a name like test would create a nameless executable with the test verb, etc. This does create more opportunities for executable duplicates if not done with care. The sync command must maintain clear warnings when this is the case.

For shell files and make targets, most executable metadata can be overwritten. For instance, including the following at the top of the

shell file or directly above a make target would create the update my-exec executable with a SECRET_VAR provided from the password secret on sync:

# f:name=my-exec f:verb=update

# f:params=secretRef:password:SECRET_VAR

# f:description="a description"

Also see the source for this parsing logic

Vault System

The vault system is a core component of Flow, providing secure storage, management, and retrieval of secrets across workspaces and executables. It extends the executable environment with multiple encryption backends.

Implementation: github.com/flowexec/vault

Provider Architecture

The vault system supports multiple storage backends, implemented through a common Provider interface:

type Provider interface {

ID() string

GetSecret(key string) (Secret, error)

SetSecret(key string, value Secret) error

DeleteSecret(key string) error

ListSecrets() ([]string, error)

HasSecret(key string) (bool, error)

Metadata() Metadata

Close() error

}

Current Providers

- Unencrypted Provider: Simple key-value store for development and testing

- AES Provider: Symmetric file encryption using AES-256-GCM sSingle key management)

- Age Provider: Asymmetric file encryption using the Age specification (supports multiple recipients)

- Keyring Provider: Uses system keyring (macOS Keychain, Linux Secret Service)

- External Provider: Integration with other external CLI tools (1Password, Bitwarden) via command execution

Vault Switching

Vaults can be switched using a context-based system:

flow vault switch development

flow secret set api-key "dev-value"

flow vault switch production

flow secret set api-key "prod-value"

Secret references support both current vault context (secretRef: "api-key") and explicit vault specification (secretRef: "production/api-key").

Template System

The templating system is supplemental to Flow’s core functionality, allowing users to define reusable templates for executables and workspaces. It uses Go’s text/template engine combined with the Expr expression language for dynamic content generation. The core implementation is in the github.com/jahvon/expression package.

The Expression library is used in all places where Flow allows templating or needs to evaluate expressions, such as in the if conditions of executables. The backing Expr language definition provides a powerful way evaluate and manipulate data for this use.

Template Processing

Define templates in YAML files with placeholders for dynamic content

form:

- key: "namespace"

prompt: "Target namespace?"

default: "default"

validate: "^[a-z][a-z0-9-]*$"

template: |

executables:

- verb: deploy

name: "{{ name }}"

exec:

cmd: kubectl apply -f deployment.yaml -n {{ form["namespace"] }}

Creation of executable definitions (flow files) is optional. The template system is flexible enough to allow for use for Flow contexts or setups completely unrelated to Flow, such as generating repos and files for new projects:

form:

- key: "namespace"

prompt: "Deployment namespace?"

default: "default"

- key: "image"

prompt: "Container image?"

required: true

- key: "replicas"

prompt: "Number of replicas?"

default: "3"

validate: "^[1-9][0-9]*$"

- key: "expose"

prompt: "Expose via LoadBalancer?"

type: "confirm"

artifacts:

- srcName: "k8s-deployment.yaml.tmpl"

dstName: "deployment.yaml"

asTemplate: true

- srcName: "k8s-service.yaml.tmpl"

dstName: "service.yaml"

asTemplate: true

if: form["expose"]

postRun:

- cmd: echo "Generated Kubernetes manifests"

- cmd: kubectl apply -f .

if: form["deploy"]

Terminal UI

The terminal UI is built using a couple of charm.sh libraries, including Bubble Tea and Glamour, to provide a rich, interactive experience for managing workspaces, executables, and vaults directly from the terminal.

Most views are defined in the tuikit package, with the exception of the browse/library view. These views are pending some refactoring to improve the user experience and make them more consistent with the rest of the UI and Desktop UI vision.

Desktop Application

The Desktop is not released yet, I’ll come back to update this section once I have a more complete implementation.

Process Communication

The desktop application uses Tauri (Rust + TypeScript) and communicates with the CLI through process execution. Each desktop operation corresponds to a CLI command, with JSON used for data exchange.

Why Tauri?

Tauri provides a lightweight, secure, and performant framework for building cross-platform applications with a web-based UI. Realistically, I decided to use it because it seemed like the most interesting choice at the time given my desire to learn Rust and build a desktop app without the bloat of Electron.

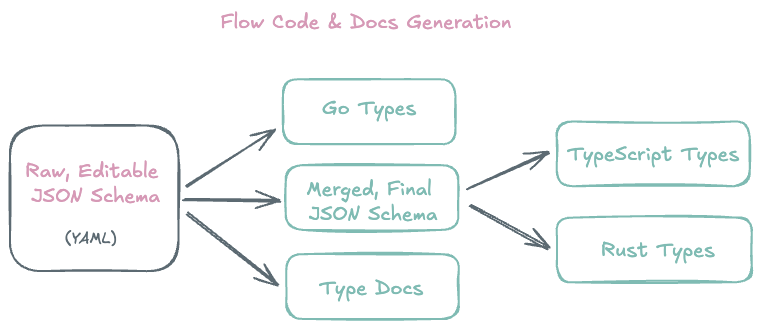

Type Generation

Type safety across Go, TypeScript, and Rust is maintained through code generation from a shared JSON schema. Schema changes automatically propagate to all three languages during the build process. The same schema is used to generate markdown pages for the documentation site.

This has been a huge quality-of-life improvement for development, as I can make changes in one place and have confidence that the types are consistent across the entire codebase. The schema also provides additional validation and documentation in IDEs with minimal effort.

Extensions / Integrations

GitHub Actions & CI/CD

Being able to run the same flow executables that you use locally in your CI/CD pipelines has always been a goal of Flow. To that end, a GitHub Action has been created and a Docker image is available for use in other CI/CD systems. All flowexec organization repositories should use this action!

Model Context Protocol

MCP server integration for AI assistant compatibility:

- Workspace browsing and executable discovery

- Natural language workflow generation

- Debug assistance with full context

Framework chosen: mcp-go

Limitations

While implementing, I wanted to implement MCP resources but support for them across clients is pretty low. In order to provide the best experience for the most clients, I decided to implement the MCP server with just Tools and Prompts.

There is a get_info tool that provides information to the AI about Flow, the current contexts, and expected schemas. This along with server instructions provided the best experience with AI assistants during my testing.

Known Limitations & Future Work

- WASM Plugin System: Allowing Flow to be extended with WebAssembly plugins for custom executable types and template generators.

- Note to self: I did a small POC of this but wasn’t very happy with the development experience on the plugin side. WASM may not be the right fit for Flow’s extensibility model, but I want to keep it open as a possibility.

- AI Runner: Integrating AI models to assist with workflow generation, debugging, and context-aware suggestions.

- Containerized Execution: Running executables in isolated containers for security and resource management.

- Current Technical Debt:

- No dependency graph analysis (circular reference detection)

Build System

Target platforms: macOS (Intel/Apple Silicon), Linux (x64/ARM)

The build pipeline needs to coordinate compilation across three languages:

- Type Generation: JSON schema → Go/TypeScript/Rust types

- Documentation Generation: Markdown documentation from JSON schema

- CLI Build: Go compilation with generated types

- Desktop Build: Tauri compilation with TypeScript types and CLI binary

- Distribution: Platform-specific packaging and code signing

For implementation details, see the repository and documentation.